AI agents are no longer a future concept. They’re arriving fast, and the numbers are striking. Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. McKinsey finds that 62% of organizations are either experimenting with or actively scaling AI agents somewhere in their enterprise. And according to Deloitte, agentic AI usage is poised to rise sharply in the next two years, but oversight is lagging: only one in five companies has a mature governance model in place.

That last statistic is the one that should concern every IT decision maker.

The Promise — and the Problem

AI agents are genuinely powerful. Unlike a chatbot that answers questions, an agent acts. It can access your systems, retrieve data, execute workflows, and make decisions — autonomously, repeatedly, at scale. The efficiency gains are real.

But so is the risk. An agent that can access your CRM can also exfiltrate data. An agent that automates procurement can run up costs without authorisation. An agent that operates outside a defined scope can take actions no one intended. And just as concerning, an agent that isn’t properly scoped can surface sensitive information to the wrong people — a sales rep seeing a colleague’s deals and compensation data, a contractor accessing customer records they have no business viewing, or a cross-functional agent inadvertently exposing HR or financial data to anyone who thinks to ask. In most organizations today, there is no centralized view of what agents are running, what they’re doing, or what they’re allowed to do. That’s not a technology problem — it’s a governance gap.

Three Layers. One Control Plane.

To understand the governance challenge, it helps to understand how agents actually work. An agent doesn’t just think — it needs to reach out into the world, gather information, reason about it, and then act. That journey involves three distinct layers, each playing a specific role.

MCP (Model Context Protocol) — the connectivity layer. First, the agent needs information and the ability to act — to query a database, read a file, send a message, trigger a workflow. MCP is a standardized protocol that connects the agent to your tools and systems: your CRM, analytics platforms, communication tools, internal APIs. Think of it as USB-C for AI integrations — one universal standard that lets agents plug into any connected system. Each MCP server does one thing well: query this, write that, retrieve the other.

The AI Model — the reasoning layer. But raw data and tool access aren’t enough. The agent also needs to reason — to interpret what it finds, draw conclusions, decide what matters, and generate a useful output. That’s the role of the AI Model — Claude, GPT, Gemini. The model is the brain: stateless, powerful, and completely dependent on what it’s given to work with. Without MCP, it has no access to your systems or the data that lives in them.

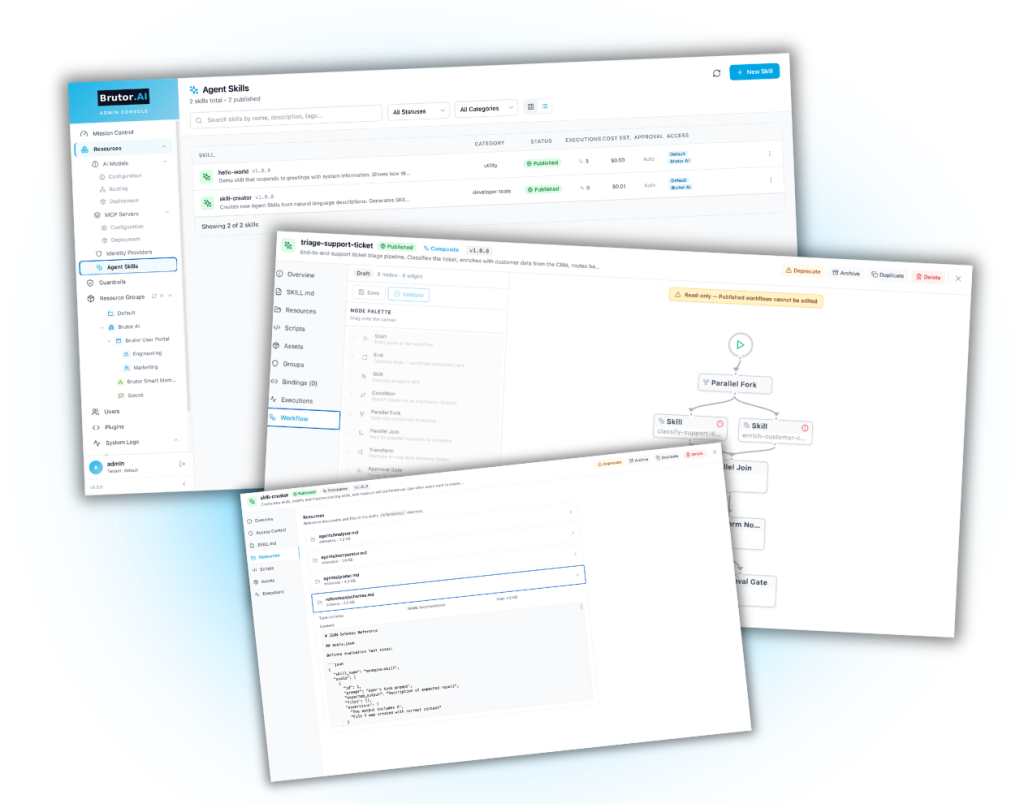

Agent Skills — the orchestration layer. The third layer is what turns isolated capability into something an organization can actually rely on. The agent needs a playbook — a defined, repeatable way of combining model reasoning with tool access to accomplish a specific task, consistently, every time. That’s what Agent Skills provide. A skill is a pre-built, versioned, self-contained workflow that orchestrates multiple model calls, multiple tool invocations, branching logic, and error handling into a single governed capability. If MCP servers are the individual instruments and AI models are the musicians, skills are the sheet music (apologies for the mixed metaphors!) — the scored arrangements that turn raw capability into a reliable, repeatable performance.

And When Agents Need to Talk to Other Agents

Most of the agent stories in 2026 are still single-agent: one agent, calling models and tools, doing one job. But the next wave is multi-agent — specialised agents that delegate to each other. A “research agent” calls a “summarisation agent” which calls a “fact-check agent.” Each one has its own scope, its own permissions, its own audit trail.

The industry standard for this is A2A (Agent-to-Agent) — an open protocol that lets agents discover each other, negotiate capabilities, stream responses, and delegate tasks across multiple hops. Brutor implements the A2A v1.0 specification natively in the gateway: every agent your team builds can publish itself as A2A-compliant with a one-line config change, and every A2A call inherits the same governance, observability, and cost attribution as a regular LLM call. No second control plane to operate. No bespoke glue code between agents. The agents talk to each other through the gateway, and the gateway makes sure they only do what they’re allowed to.

The result: when one agent needs another agent’s capability, the cost still attributes back to the calling team, the audit trail still records the multi-hop delegation, and the same RBAC rules still apply. Your CFO sees the spend on agent-to-agent traffic the same way they see token spend on a single chat reply.
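
To make the attribution idea concrete, here is a minimal sketch of how a multi-hop delegation could be recorded so that spend rolls up to the team that started the chain. This is not Brutor's API: `Hop`, `DelegationTrace`, and `spend_by_team` are hypothetical names, and the costs (in cents) are made-up illustration values.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Hop:
    """One agent-to-agent call (hypothetical record shape)."""
    caller: str
    callee: str
    cost_cents: int  # illustrative cost of this single call


@dataclass
class DelegationTrace:
    """Audit record for one delegation chain, owned by the originating team."""
    team: str
    hops: list[Hop] = field(default_factory=list)

    def record(self, caller: str, callee: str, cost_cents: int) -> None:
        self.hops.append(Hop(caller, callee, cost_cents))

    def chain(self) -> list[str]:
        # Assumes hops were recorded in order, each starting where the last ended.
        if not self.hops:
            return []
        return [self.hops[0].caller] + [hop.callee for hop in self.hops]


def spend_by_team(traces: list[DelegationTrace]) -> dict[str, int]:
    """Sum every hop's cost under the team that started the chain."""
    totals: dict[str, int] = defaultdict(int)
    for trace in traces:
        totals[trace.team] += sum(hop.cost_cents for hop in trace.hops)
    return dict(totals)


trace = DelegationTrace(team="research")
trace.record("research-agent", "summarisation-agent", 25)
trace.record("summarisation-agent", "fact-check-agent", 50)

print(" -> ".join(trace.chain()))
print(spend_by_team([trace]))
```

The point of the sketch is that downstream hops never open a new cost center: however many agents the chain passes through, the bill lands on the team that made the first call.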

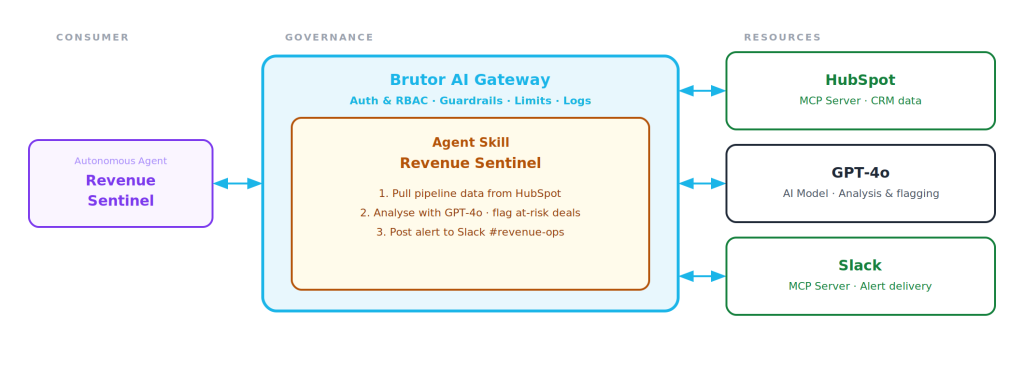

The Revenue Sentinel: A Real-Life Autonomous Agent Example

Let’s say you want to build an autonomous agent that watches over your sales pipeline and automatically alerts the team to deals that are stalling or at risk — let’s call it the “Revenue Sentinel.” It’s representative of dozens of agent patterns Brutor’s customers run on the platform — others include ticket triage, contract review, customer-research synthesis, and onboarding workflows. It’s the kind of agent just about every enterprise would benefit from, and that until recently would have been handled through some combination of manual reporting, scheduled exports, and someone writing up a summary every week.

The Revenue Sentinel autonomous agent would automate that process. It would pull deal and pipeline data from a CRM system like HubSpot, run an AI analysis using GPT-4o to identify which deals need attention, and deliver the alert straight to your dedicated revenue ops Slack channel every week, without anyone lifting a finger. Your sales team starts Monday knowing exactly where to focus their efforts.
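
The shape of that workflow can be sketched in a few lines. The example below is a simplified stand-in, not the real agent: it replaces the GPT-4o analysis with a plain inactivity rule, uses in-memory sample data instead of HubSpot, and returns the alert text instead of posting to Slack. The `Deal` fields, the stage names, and the 14-day threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deal:
    """Hypothetical subset of the fields a CRM export might contain."""
    name: str
    stage: str
    amount: int
    last_activity: date


CLOSED_STAGES = {"closed-won", "closed-lost"}  # assumed stage names
STALL_AFTER_DAYS = 14                          # assumed threshold


def stalled_deals(deals: list[Deal], today: date,
                  stall_after_days: int = STALL_AFTER_DAYS) -> list[Deal]:
    """Return open deals with no recent activity, largest amount first."""
    flagged = [
        deal for deal in deals
        if deal.stage not in CLOSED_STAGES
        and (today - deal.last_activity).days >= stall_after_days
    ]
    return sorted(flagged, key=lambda deal: deal.amount, reverse=True)


def format_alert(deals: list[Deal], today: date) -> str:
    """Build the message body that would be sent to the team channel."""
    if not deals:
        return "No stalled deals this week."
    lines = [f"{len(deals)} deal(s) need attention:"]
    for deal in deals:
        idle_days = (today - deal.last_activity).days
        lines.append(
            f"- {deal.name} ({deal.stage}, ${deal.amount:,}): "
            f"no activity for {idle_days} days"
        )
    return "\n".join(lines)


today = date(2026, 3, 2)
pipeline = [
    Deal("Acme renewal", "negotiation", 50000, date(2026, 2, 1)),
    Deal("Globex pilot", "proposal", 12000, date(2026, 2, 27)),
    Deal("Initech expansion", "closed-won", 80000, date(2026, 1, 1)),
]
flagged = stalled_deals(pipeline, today)
print(format_alert(flagged, today))
```

In the agent described above, the rule inside `stalled_deals` is the part a model would replace, since "at risk" usually depends on more context than a single date.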

How Does Brutor AI Platform Help?

Brutor AI Platform is the governance and control layer that sits between your people, your agents, and the AI services they depend on. Every resource type (text, image, voice, and video models; MCP servers; agent skills; and A2A traffic between agents) is proxied through the gateway. Every call therefore passes through the same governance layer: authentication, role-based access control, guardrails, three-state human-in-the-loop (HITL) approval, rate limits, cost caps, full observability, and Policy-as-Code that ships with your code instead of drifting in a wiki.

For an agent like Revenue Sentinel, Brutor manages the skill server-side — stored, versioned, and centrally governed, not scattered across developer machines or chat sessions. You define the workflow once, publish an immutable version, and every authorised team in your organization runs it the same way, every time, through the gateway.

Setting this up in the Brutor AI Platform is straightforward. You connect HubSpot and Slack as MCP servers, define the Revenue Sentinel skill — what data to pull, what to analyze, where to send the output, what happens in case of errors — and assign it to your agent along with who can run it. The gateway then handles execution, guardrails, logging, and budget enforcement automatically. No deals slip through unnoticed, no one has to chase a report, and your IT team has full visibility into what the agent accessed and when.
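
One way to picture "define once, publish an immutable version" is a small registry in which every publish creates a new frozen record and older versions stay untouched. The sketch below is an illustration under assumptions, not the platform's actual data model: `SkillVersion`, `SkillRegistry`, the field names, and the role names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SkillVersion:
    """A published skill definition; frozen so it cannot be edited in place."""
    name: str
    version: int
    sources: tuple[str, ...]       # where data is pulled from
    destination: str               # where the output is sent
    allowed_roles: frozenset[str]  # who may run this version


class SkillRegistry:
    """Keeps every published version of every skill (hypothetical)."""

    def __init__(self) -> None:
        self._versions: dict[str, list[SkillVersion]] = {}

    def publish(self, name: str, sources: list[str], destination: str,
                allowed_roles: set[str]) -> SkillVersion:
        history = self._versions.setdefault(name, [])
        skill = SkillVersion(name, len(history) + 1, tuple(sources),
                             destination, frozenset(allowed_roles))
        history.append(skill)
        return skill

    def get(self, name: str, version: int | None = None) -> SkillVersion:
        history = self._versions[name]
        if version is None:
            return history[-1]  # latest published version
        if not 1 <= version <= len(history):
            raise KeyError(f"{name} has no version {version}")
        return history[version - 1]

    def can_run(self, name: str, role: str, version: int | None = None) -> bool:
        return role in self.get(name, version).allowed_roles


registry = SkillRegistry()
v1 = registry.publish("revenue-sentinel", ["hubspot"], "slack:#revenue-ops",
                      {"sales-ops"})
v2 = registry.publish("revenue-sentinel", ["hubspot"], "slack:#revenue-ops",
                      {"sales-ops", "inside-sales"})
print(registry.get("revenue-sentinel").version)
```

Because version 1 is never mutated, anything pinned to it keeps running unchanged while other teams move to version 2.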

Let’s summarize how your organization benefits by using Brutor AI Platform to manage Revenue Sentinel (and any other agent you build afterwards…):

Brutor AI Platform for AI Agents:

Each agent is scoped to a resource group — Marketing’s agent never touches Engineering’s data, contractor agents never see HR records. Isolation is enforced structurally at the gateway, not by trust.

Human-in-the-loop approval is set per tool, with three states: enabled (runs immediately), approval-required (blocks pending human review), or disabled (forbidden). Mark trusted tools to auto-approve; gate the actions that need a human in the loop.

Export the whole resource-group config as versioned YAML. Validate, dry-run, apply, roll back. Governance that ships with your code instead of drifting in a wiki.

Refine what “stalling” means or adjust alerting thresholds — publish a new version without breaking anything running. Switch AI models in the gateway without touching agent code.

Once defined, your EMEA team, APAC team, and inside sales all run the same Revenue Sentinel skill — no one rebuilds it from scratch.

See exactly when the agent ran, which records it accessed, how many tokens it consumed, and what it cost — every run, no budget surprises. Access tokens never leave the gateway.
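
Two of the controls listed above, resource-group scoping and the three per-tool states, combine naturally into a single default-deny check. The following is a minimal sketch of that logic, assuming a simple in-memory policy table. The group names, tool names, and the `authorize` function are hypothetical and do not reflect Brutor's actual configuration schema.

```python
from enum import Enum


class ToolState(Enum):
    ENABLED = "enabled"                      # runs immediately
    APPROVAL_REQUIRED = "approval-required"  # blocks pending human review
    DISABLED = "disabled"                    # forbidden


class Decision(Enum):
    ALLOW = "allow"
    PENDING_APPROVAL = "pending-approval"
    DENY = "deny"


# Hypothetical policy: each resource group lists only the tools it may see.
POLICY: dict[str, dict[str, ToolState]] = {
    "revenue-ops": {
        "hubspot.read_deals": ToolState.ENABLED,
        "slack.post_message": ToolState.APPROVAL_REQUIRED,
        "hubspot.delete_deal": ToolState.DISABLED,
    },
    "hr": {
        "hr.read_compensation": ToolState.ENABLED,
    },
}


def authorize(policy: dict[str, dict[str, ToolState]],
              agent_group: str, tool: str) -> Decision:
    """Decide a tool call using only the agent's own resource group.

    Tools that are missing from the group, including tools that belong
    to another group, are treated as disabled (default deny).
    """
    group_tools = policy.get(agent_group, {})
    state = group_tools.get(tool, ToolState.DISABLED)
    if state is ToolState.ENABLED:
        return Decision.ALLOW
    if state is ToolState.APPROVAL_REQUIRED:
        return Decision.PENDING_APPROVAL
    return Decision.DENY


for tool in ("hubspot.read_deals", "slack.post_message", "hr.read_compensation"):
    print(tool, "->", authorize(POLICY, "revenue-ops", tool).value)
```

The lookup never consults another group's table, so a revenue-ops agent asking for an HR tool is denied by construction rather than by a rule someone has to remember to write.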

For IT leaders navigating this moment, when agents are proliferating faster than governance frameworks can keep up, a control plane like Brutor is what turns agentic AI from a liability into a competitive advantage.