Brutor AI Gateway

Route, secure, and govern all AI traffic — LLMs, MCP servers, and autonomous agents — through one high-performance control plane.

Built differently. Built right.

Every AI traffic type, governed once.

Models, MCP tools, Skills, A2A, Knowledge Bases, Guardrails, Audit — all through the same gateway. No second product to operate, no fragmented governance.

Built in Rust. Zero performance tax.

Lightweight Rust proxy, asynchronous I/O, in-process guardrails. Governance shouldn’t slow your AI down — and with Brutor, it doesn’t.

Drop in. No lock-in.

Speak the protocols your stack already uses — OpenAI-compatible API, native MCP, native A2A v1.0. Run it where your security team needs it: on-prem, private cloud, or Brutor SaaS.

Brutor AI Gateway capabilities

A tour of the main capabilities the Brutor AI Gateway provides — across three layers: the AI services it routes, the governance it enforces, and the operations it gives IT to run it in production.

The AI services Brutor routes.

Every model, tool, skill, knowledge base, and agent your team relies on — routed through one governed front door.

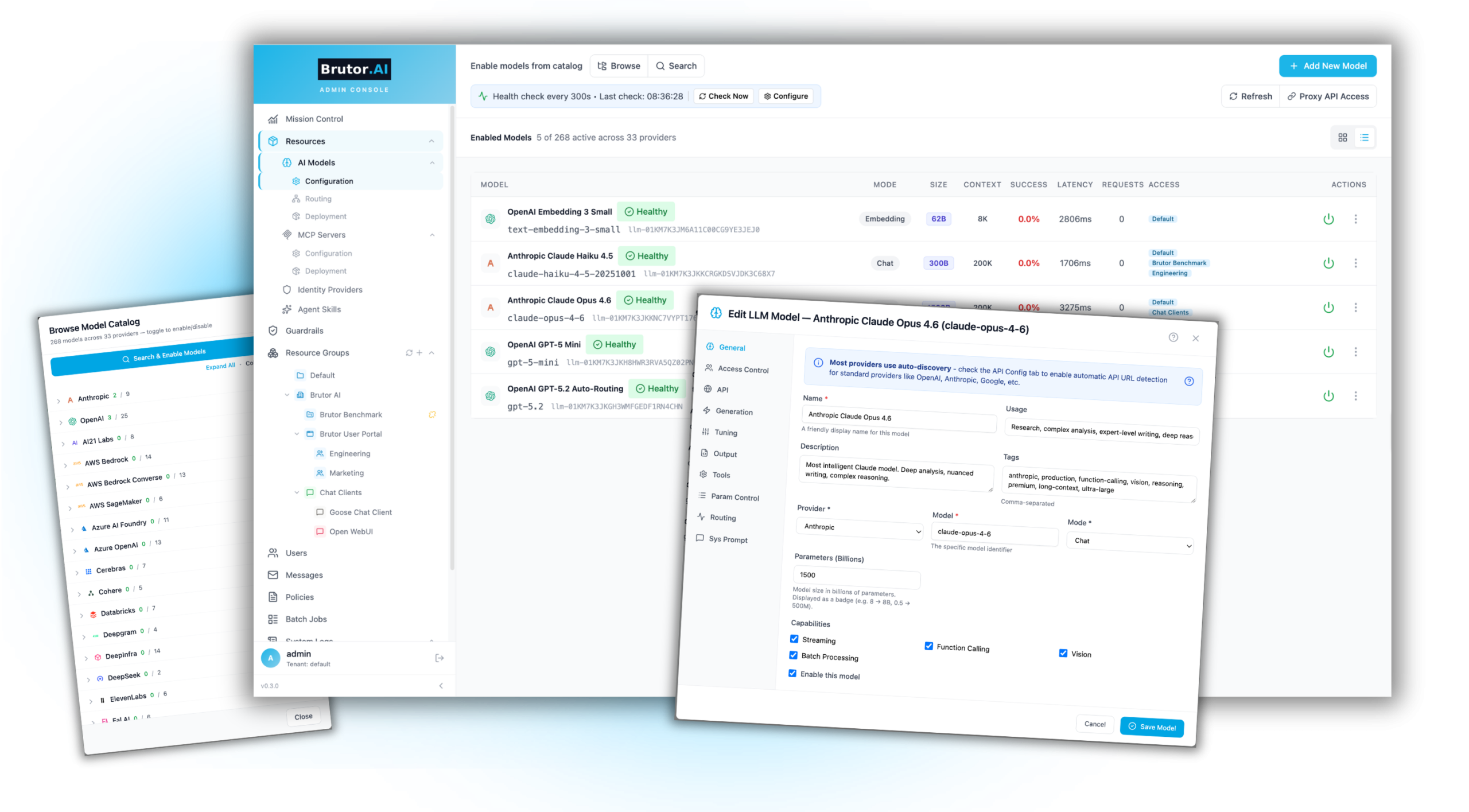

/01/Model Management & Routing

One API for every model and every modality. Switch providers without changing code.

- 300 preconfigured models across all major providers — OpenAI, Anthropic, Google, Azure, Mistral, and self-hosted via Ollama / vLLM / KServe.

- Every modality through one endpoint — text, image (DALL·E), voice (OpenAI TTS), transcription (Whisper), video (Luma) — same governance, audit, and cost rails for all of them.

- Per-model temperature defaults — set temperature, top-p, and max tokens centrally so every team gets consistent model behavior, no per-call tweaking required.

- Routing groups with weighted load balancing, failover, and cost-based routing strategies.

- Model health checks with configurable intervals and automatic unhealthy-model exclusion.

- Batch processing — submit thousands of requests asynchronously at reduced provider cost.

- Secure API key vault — provider keys encrypted at rest, managed centrally, never exposed to users.
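Weighted load balancing with failover and health-check exclusion can be pictured with a small sketch. It is a minimal illustration under stated assumptions, not Brutor's routing code: `Deployment`, `pick_order`, and `route` are hypothetical names, health is reduced to a boolean set by the last check, and every exception is treated as retryable.

```python
import random
from dataclasses import dataclass


@dataclass
class Deployment:
    name: str      # hypothetical deployment identifier
    weight: int    # relative share of traffic within the routing group
    healthy: bool  # outcome of the most recent health check


def pick_order(group: list, rng: random.Random) -> list:
    """Healthy deployments in weighted-random try order.

    Unhealthy (or zero-weight) deployments are excluded. The rest are drawn
    without replacement, so heavier deployments tend to be tried first and
    every other healthy deployment stays available as a failover target.
    """
    pool = [d for d in group if d.healthy and d.weight > 0]
    order = []
    while pool:
        i = rng.choices(range(len(pool)), weights=[d.weight for d in pool], k=1)[0]
        order.append(pool.pop(i))
    return order


def route(group: list, call, rng: random.Random = None):
    """Call deployments in try order, failing over to the next one on error."""
    rng = rng or random.Random()
    errors = []
    for deployment in pick_order(group, rng):
        try:
            return call(deployment)
        except Exception as exc:  # a real gateway would retry only retryable errors
            errors.append((deployment.name, repr(exc)))
    raise RuntimeError(f"all deployments failed: {errors}")
```

With a primary weighted 3, a backup weighted 1, and an offline deployment, the offline one is never tried, and a timeout on the primary falls through to the backup. Drawing without replacement is one simple way to turn weights into a failover order; a cost-based strategy would sort candidates by price instead.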

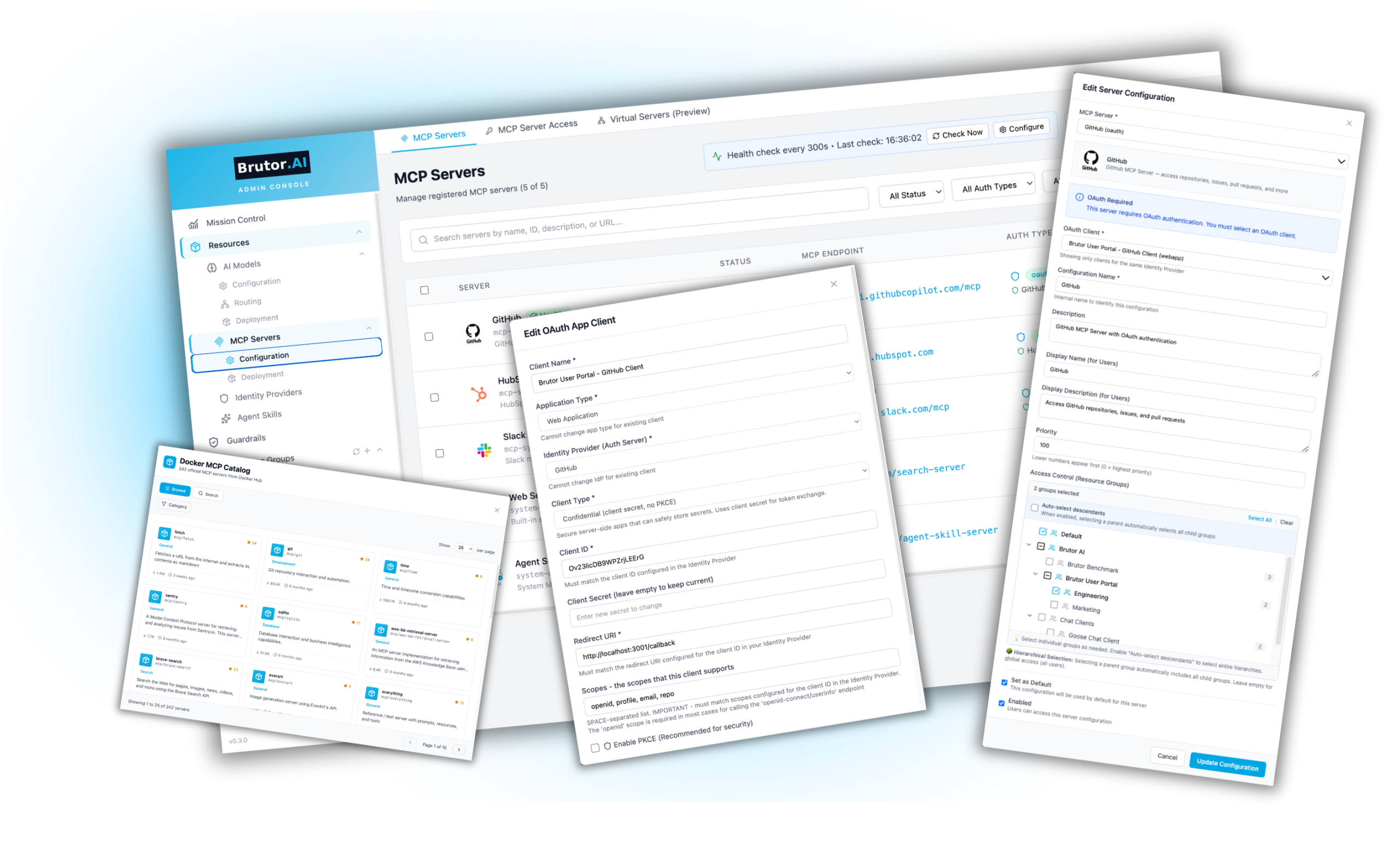

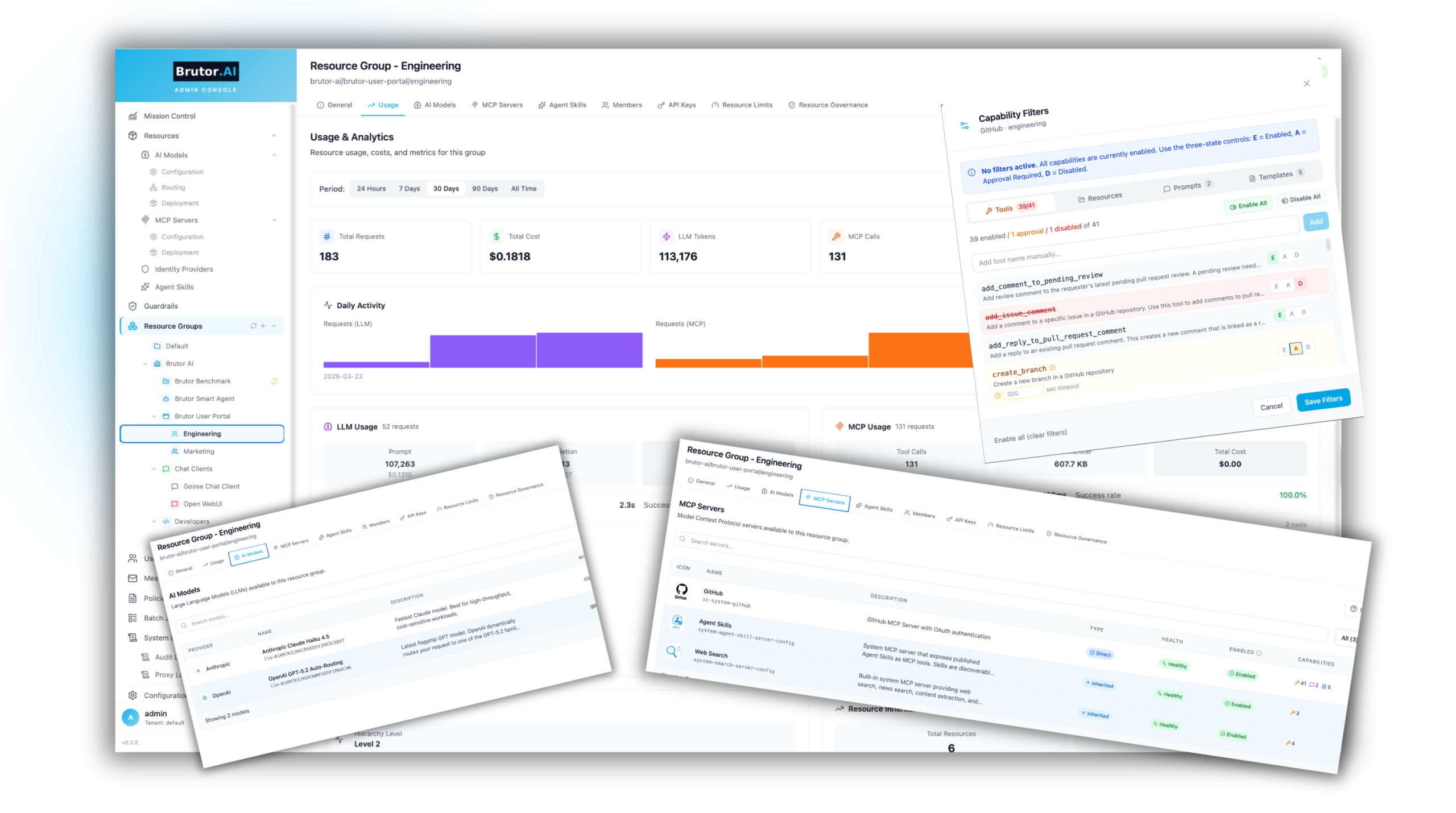

/02/MCP Server Governance

Govern every tool your AI connects to.

- 80+ MCP servers ready out of the box. A curated catalog covering GitHub, HubSpot, Slack, Snowflake, Asana, Atlassian, AWS, MongoDB, Postgres, Tableau, Terraform, Grafana and more — one-click enable, no scripting.

- Containerized images mirrored to Brutor’s own registry so production deployments never depend on an external container registry going down.

- Bring your own — add any Docker image, GitHub repo, or remote MCP URL. Mix containerized and remote servers in one governed catalog.

- OAuth proxy handles authentication automatically for Asana, Atlassian, GitHub, HubSpot and every other OAuth-protected server — access tokens stay encrypted at the Gateway, never exposed to clients.

- Kubernetes-ready for production, with built-in system MCPs for Skills and Web Search included in every deployment.

- Capability filtering per resource group — enable individual tools, resources, and prompts so Marketing only sees HubSpot’s contact-list tools while Engineering gets the full GitHub surface.

- Three-state tool approval per MCP tool — enabled (runs immediately), approval-required (blocks pending human review), or disabled (forbidden). Mark trusted tools as auto-approve, and gate the actions that need a human in the loop.

- Direct function calling supported alongside MCP — use whichever fits the model.
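The three-state approval model maps naturally onto a small enum plus a dispatch decision. This sketch is hypothetical throughout: `ToolState`, `dispatch`, the list standing in for the approval queue, and the tool names are illustrative, and treating unconfigured tools as disabled is an assumed deny-by-default choice.

```python
from enum import Enum


class ToolState(Enum):
    ENABLED = "enabled"                      # runs immediately
    APPROVAL_REQUIRED = "approval-required"  # blocks pending human review
    DISABLED = "disabled"                    # forbidden


def dispatch(tool: str, states: dict, approval_queue: list) -> str:
    """Decide what happens to a tool call based on its configured state."""
    # Assumption: a tool with no explicit configuration is denied by default.
    state = states.get(tool, ToolState.DISABLED)
    if state is ToolState.ENABLED:
        return "run"
    if state is ToolState.APPROVAL_REQUIRED:
        approval_queue.append(tool)  # parked until a human approves or rejects it
        return "pending"
    return "rejected"
```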

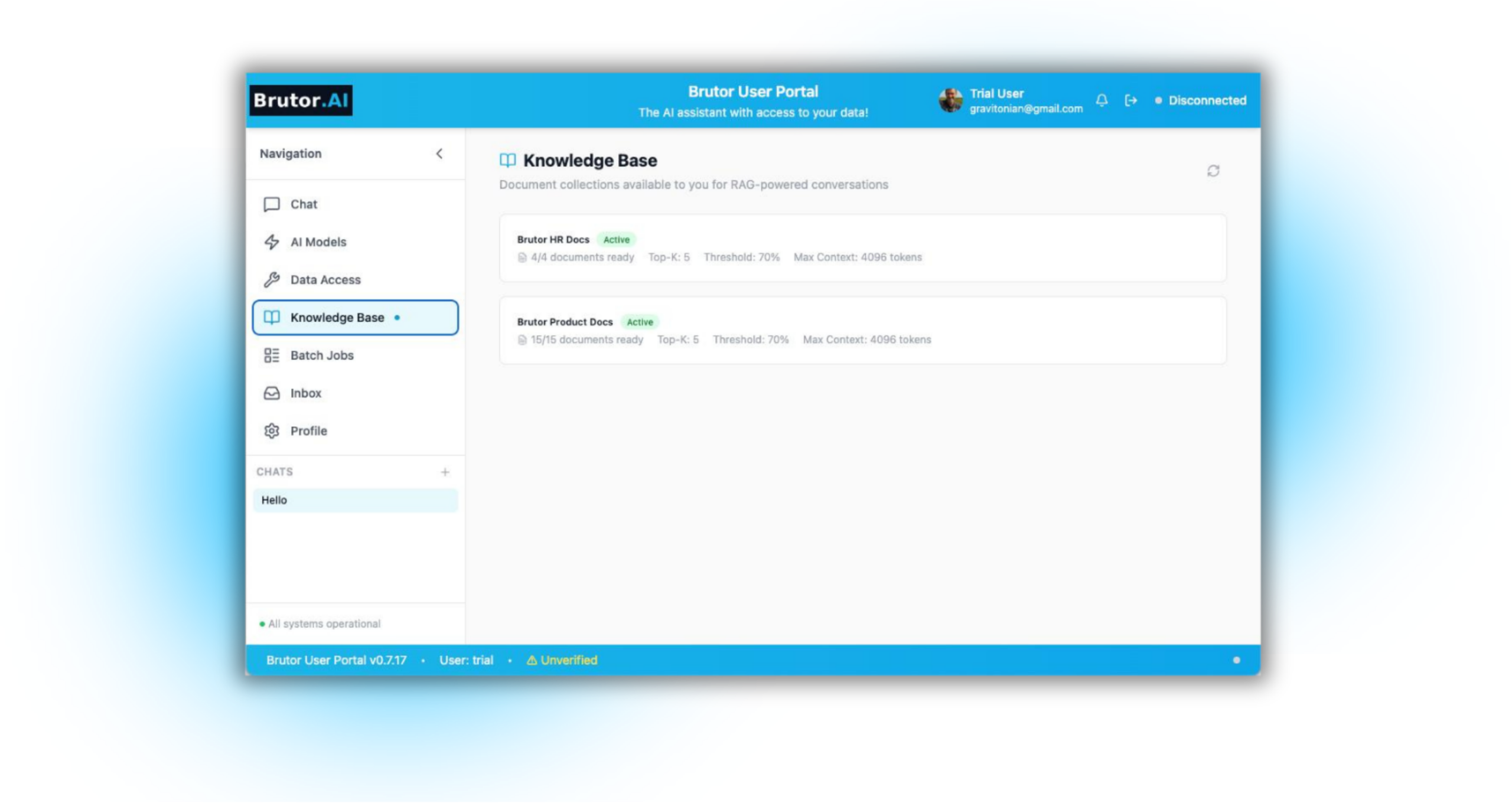

/03/Knowledgebase for Internal Content

Your documents. Your AI. Governed.

- RAG built into the gateway — no separate vector database to operate.

- Upload PDFs, Word docs, markdown, and HTML — documents chunked, embedded, and indexed automatically.

- Per-resource-group knowledgebases — each team, department, or app gets its own isolated corpus.

- Powered by Qdrant — the same vector engine that drives semantic caching.

- Access-controlled retrieval — only users permitted for a group can query that group’s knowledgebase.

- Source citation on every answer — see exactly which documents grounded each response.

- Full audit trail — every retrieval logged with who queried what, when, and which sources were returned.

/04/Skills

Capture your business logic. Let AI execute it.

- Encode your organization’s processes — define skills that capture procedures and domain knowledge.

- Skills implemented as MCP tools — any MCP client can discover and use them.

- Version-controlled with metadata, scripts, templates, and workflow definitions.

- Publish/unpublish controls — skills must be explicitly published before they’re available.

- Per-group assignment — marketing gets marketing skills, engineering gets engineering skills.

- Three-state HITL approval per skill — enabled, approval-required, or disabled. The same approval queue covers MCP tool calls and skill calls; same audit trail.
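Publish controls and per-group assignment combine into a single visibility rule. A minimal sketch, assuming a skill is discoverable only when it is both published and assigned to the caller's group; `Skill`, `visible_skills`, and the example skill names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    version: str
    published: bool = False
    groups: set = field(default_factory=set)  # resource groups the skill is assigned to


def visible_skills(skills: list, group: str) -> list:
    """Skills a group's MCP clients could discover: published AND assigned to that group."""
    return [s.name for s in skills if s.published and group in s.groups]
```

A draft skill stays invisible to its own group until it is published, and a published skill never leaks into a group it was not assigned to.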

/05/Agent-to-Agent (A2A) Communication

Govern agent-to-agent traffic through the same Gateway.

- Native A2A v1.0 specification — the full open standard, implemented natively in the Gateway. No vendor extensions.

- Inbound and outbound agent traffic — both directions governed: external agents calling into your organization via the Gateway, and your agents reaching out to external agents.

- Signed Agent Cards — published at /.well-known/agent-card/{tenant}/{name}, signed with Ed25519 JWS, RBAC-filtered per caller.

- Streaming, full task lifecycle, push notifications — SSE token streaming, SUBMITTED → WORKING → COMPLETED state machine, push notification configs per task.

- HMAC-signed delegation chains — multi-hop agent calls carry signed provenance, so no hop can be spoofed, however deep the delegation goes.

- Same RBAC, cost ceilings, and audit as LLM traffic — no second control plane to operate. A2A spend attributes back to the calling team like any other gateway call.
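HMAC-signed delegation chains can be illustrated with Python's standard `hmac` module. This is a sketch of the general technique, not Brutor's wire format: it assumes a single secret key held by the gateway (so agents cannot mint valid hops themselves), and `sign_hop`, `verify_chain`, and the hop fields are hypothetical. Each hop's signature covers the agent name, its depth, and the previous hop's signature, so altering, reordering, or inserting a hop makes verification fail.

```python
import hashlib
import hmac
import json


def _hop_payload(agent: str, depth: int, prev_sig: str) -> bytes:
    # Canonical JSON so the signer and the verifier hash identical bytes.
    return json.dumps({"agent": agent, "depth": depth, "prev": prev_sig}, sort_keys=True).encode()


def sign_hop(key: bytes, chain: list, agent: str) -> list:
    """Return a new chain with one more hop, chained to the previous signature."""
    prev_sig = chain[-1]["sig"] if chain else ""
    depth = len(chain)
    sig = hmac.new(key, _hop_payload(agent, depth, prev_sig), hashlib.sha256).hexdigest()
    return chain + [{"agent": agent, "depth": depth, "sig": sig}]


def verify_chain(key: bytes, chain: list) -> bool:
    """Recompute every hop's signature in order; any mismatch rejects the whole chain."""
    prev_sig = ""
    for depth, hop in enumerate(chain):
        expected = hmac.new(key, _hop_payload(hop["agent"], depth, prev_sig), hashlib.sha256).hexdigest()
        if hop.get("depth") != depth or not hmac.compare_digest(expected, hop["sig"]):
            return False
        prev_sig = hop["sig"]
    return True
```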

The controls and policies Brutor enforces.

How IT keeps every model call, every tool invocation, and every agent action within the rails — from real-time guardrails to versioned policy bundles.

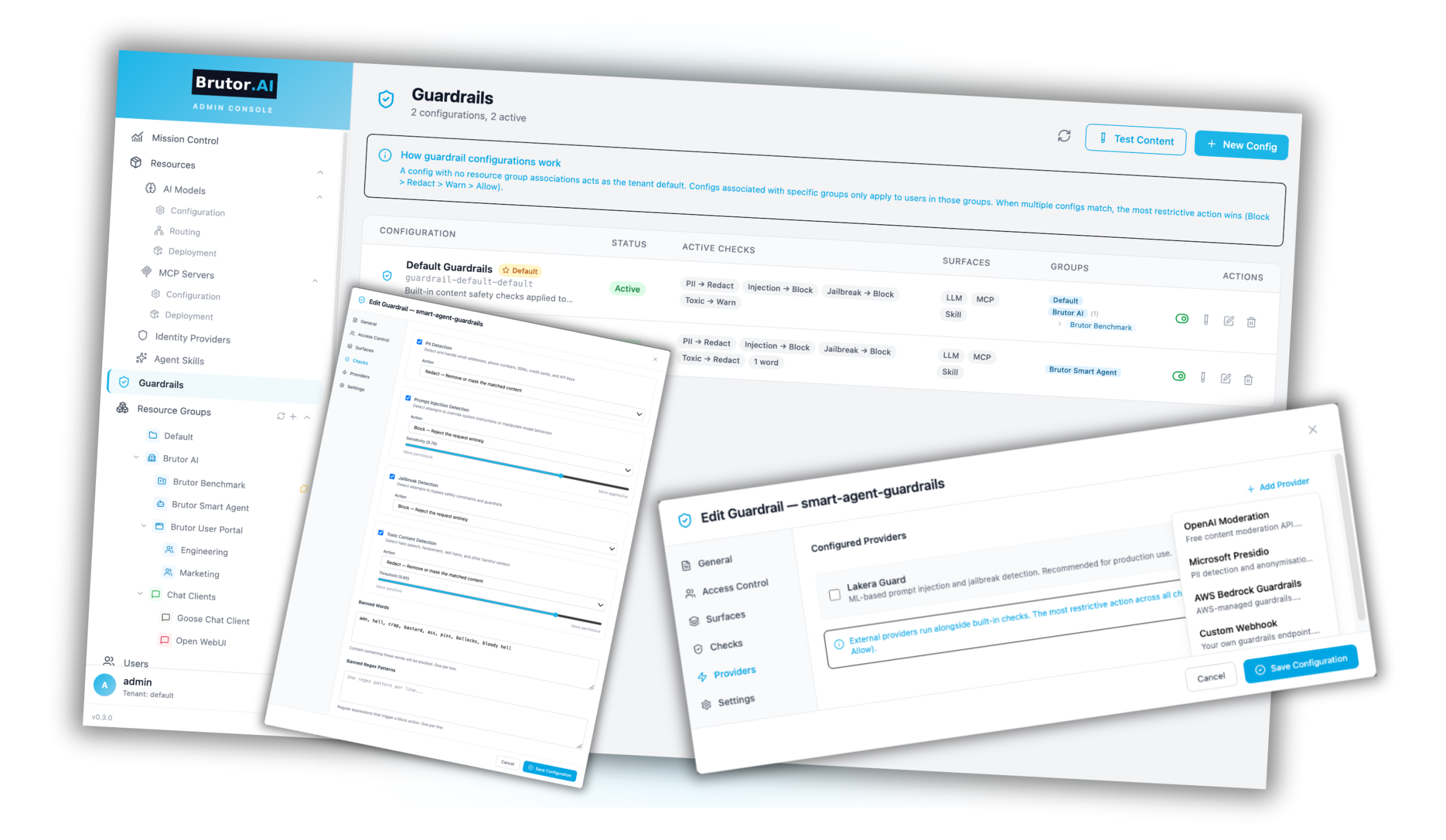

/06/Guardrails & Data Governance

Security baked in. Every request filtered. Every response checked.

- Built-in guardrails enabled by default — PII detection and masking, prompt-injection blocking, jailbreak filtering, toxic-content filtering, banned-word lists.

- Three-state HITL approval per tool, skill, and capability — enabled (runs immediately), approval-required (blocks pending human review), disabled (forbidden). One approval queue covers MCP tool calls and skill calls; same audit trail for human and agent callers alike.

- External guardrail providers — AWS Bedrock Guardrails, Lakera, with most-restrictive-action-wins logic.

- Global or per-group application — different teams can have different security profiles.

- Guard every surface — LLM input/output, MCP input/output, Skill input/output, A2A traffic.

- API key vault — provider credentials stored encrypted, rotatable, never exposed to client-side code.
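The most-restrictive-action-wins rule reduces to taking a maximum over an ordered set of actions. In this sketch the `Action` levels (allow, mask, block), their ordering, and the provider keys are assumptions for illustration; the real providers' verdict vocabularies differ.

```python
from enum import IntEnum


class Action(IntEnum):
    # Ordered from least to most restrictive; the numeric order decides who "wins".
    ALLOW = 0
    MASK = 1   # e.g. redact detected PII and continue
    BLOCK = 2


def combine(verdicts: dict) -> Action:
    """Most-restrictive-action-wins: the strictest verdict from any provider applies."""
    return max(verdicts.values(), default=Action.ALLOW)
```

If the built-in PII filter asks to mask while an external provider asks to block, the request is blocked; with no verdicts at all, it passes.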

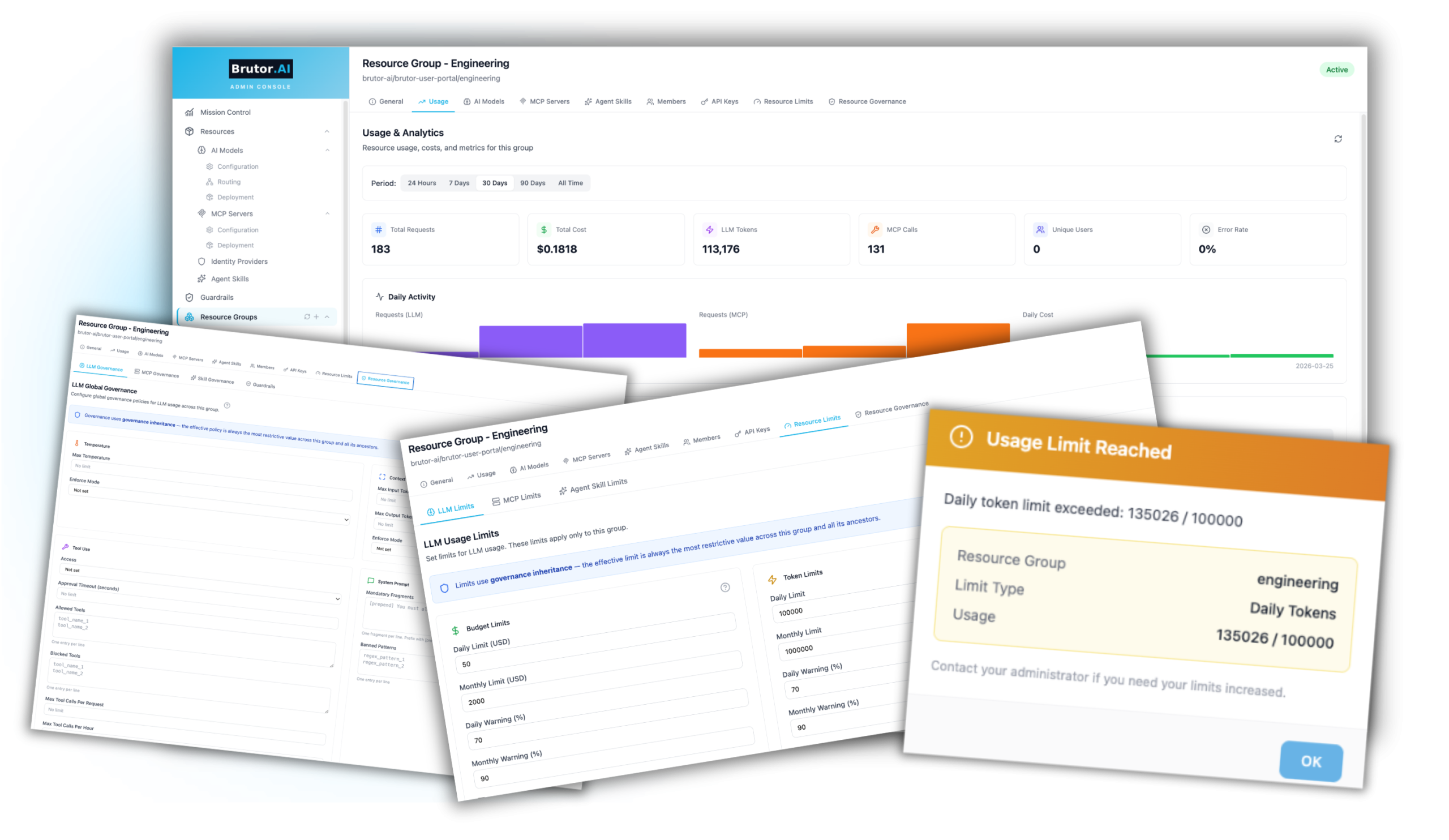

/07/Usage Limits and Policies

Mirror your organization. Govern at every level.

- Hierarchical resource groups — organization → department → team → app → agent.

- Everything scoped to the group — AI models, MCP servers, Skills, Guardrails, Members, API keys, Limits, Governance.

- Resource inheritance — child groups inherit their parents' resources and add their own on top, unless set to standalone.

- Governance inheritance — the effective policy is always the most restrictive value across the group and all its ancestors.

- Policy-as-code export. Export any resource group’s complete configuration as versioned policy-as-code. Edit, review, and re-apply a new version when the setup evolves.

- Per-group usage dashboards — requests, cost, and tokens with model-level and tool-level breakdown.

- 12+ RBAC roles with granular permissions — create custom roles for any access pattern.

- Two authentication paths — Gateway users (portal login) and API keys (agent/app access) kept separate.
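Governance inheritance, where the effective policy is the most restrictive value across a group and its ancestors, can be sketched by walking up the hierarchy. The two settings here are hypothetical: a daily budget (the lowest cap wins) and a flag allowing external models (any ancestor's `False` wins, and a flag left unset everywhere is assumed to mean allowed).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Group:
    name: str
    parent: Optional["Group"] = None
    daily_budget_usd: Optional[float] = None       # None = no cap set at this level
    allow_external_models: Optional[bool] = None   # None = not set at this level


def ancestry(group):
    """Yield the group itself, then each ancestor up to the organization root."""
    while group is not None:
        yield group
        group = group.parent


def effective_policy(group: Group) -> dict:
    """Most restrictive value across the group and all its ancestors."""
    chain = list(ancestry(group))
    budgets = [g.daily_budget_usd for g in chain if g.daily_budget_usd is not None]
    flags = [g.allow_external_models for g in chain if g.allow_external_models is not None]
    return {
        "daily_budget_usd": min(budgets) if budgets else None,  # lowest cap wins
        "allow_external_models": all(flags),                    # any False wins
    }
```

A department that sets a $1,000 cap under an organization capped at $500 ends up with $500, and an app several levels down inherits the tightest cap on its path plus any prohibition set above it.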

/08/Audit & Compliance — Policy-as-Code

Built to support your compliance journey — from EU AI Act to HIPAA.

- Complete audit trails — every admin action, AI interaction, and tool call logged with who, when, and what.

- Proxy logs with full request/response bodies — filterable by user, group, model, server, and date range.

- Drill-down from summary views to individual interaction detail.

- Policy-as-Code lifecycle — export any resource group’s complete configuration as versioned YAML; validate → dry-run → apply → rollback. Governance ships with your code instead of drifting in a wiki.

- EU AI Act Article 9 support — guardrails, resource groups, and access controls enforce risk-management policies as code.

- EU AI Act Article 12 support — traceability and automatic record-keeping with 6-month log retention.

- Infrastructure for HIPAA, SOC 2, and GDPR compliance — data governance, access controls, and audit trails your compliance team needs.
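The validate → dry-run → apply → rollback lifecycle can be sketched with an in-memory version list. This is a hypothetical stand-in: the exports described above are YAML, while this sketch works on plain dictionaries to stay dependency-free, and `PolicyStore`, its required keys, and the example values are all assumptions.

```python
import copy


class PolicyStore:
    """In-memory stand-in for one resource group's versioned configuration."""

    REQUIRED_KEYS = {"models", "guardrails", "limits"}  # hypothetical schema

    def __init__(self, initial: dict):
        self.versions = [copy.deepcopy(initial)]  # version 1 is the starting config

    @property
    def current(self) -> dict:
        return self.versions[-1]

    def validate(self, candidate: dict) -> list:
        """Return a list of problems; an empty list means the candidate is valid."""
        missing = self.REQUIRED_KEYS - set(candidate)
        return [f"missing key: {k}" for k in sorted(missing)]

    def dry_run(self, candidate: dict) -> dict:
        """Report which top-level keys would change (old, new), without applying anything."""
        keys = set(self.current) | set(candidate)
        return {k: (self.current.get(k), candidate.get(k))
                for k in sorted(keys) if self.current.get(k) != candidate.get(k)}

    def apply(self, candidate: dict) -> int:
        """Validate, then store the candidate as a new version; returns the version number."""
        errors = self.validate(candidate)
        if errors:
            raise ValueError(errors)
        self.versions.append(copy.deepcopy(candidate))
        return len(self.versions)

    def rollback(self) -> int:
        """Discard the newest version (never the first); returns the active version number."""
        if len(self.versions) > 1:
            self.versions.pop()
        return len(self.versions)
```

Rollback here simply discards the newest version; a production system would more likely record the rollback itself as a new version so the history stays append-only for auditors.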

Running the gateway in production.

Cost rails, caches, observability — what IT and FinOps need to keep AI traffic fast, cheap, and visible.

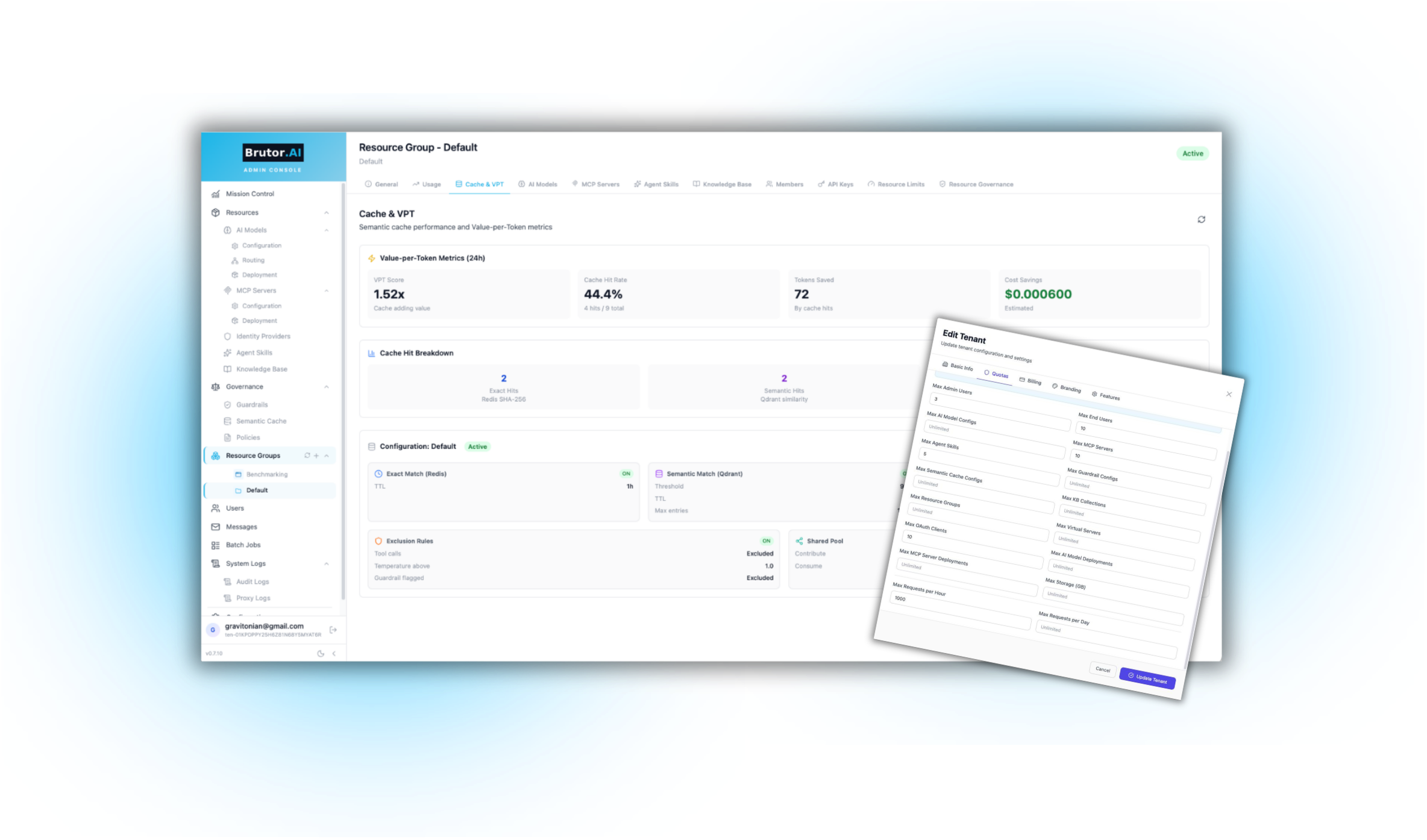

/09/Caches

Your fastest, cheapest LLM call is the one you never make.

- Two-layer caching — Redis for exact-match lookups, Qdrant vector store for semantic similarity matches.

- Semantic matching — recognizes when reworded prompts ask the same thing, not just byte-identical queries.

- Per-resource-group isolation — one group’s answers never leak into another group’s responses.

- Configurable similarity thresholds per group — tune the trade-off between hit rate and answer precision.

- Configurable TTLs — from minutes for fast-moving data to days for stable reference content.

- Surfaced in Mission Control — cache hit rates, cost saved, and latency improvements visible in real time.
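The two-layer lookup (exact match first, then semantic similarity above a per-group threshold, with TTL expiry and per-group isolation) can be sketched as follows. Plain dictionaries stand in for Redis and Qdrant, and `toy_embed` is a deliberately crude bag-of-words embedding; a real deployment would use an embedding model. All names here are hypothetical.

```python
import math
import time

VOCAB = ["how", "do", "i", "reset", "change", "my", "password", "vpn"]


def toy_embed(text: str) -> list:
    """Bag-of-words counts over a tiny fixed vocabulary; illustration only."""
    words = text.lower().replace("?", "").split()
    return [float(words.count(w)) for w in VOCAB]


def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TwoLayerCache:
    """Exact match first, then semantic match; entries are partitioned by resource group."""

    def __init__(self, embed, threshold: float = 0.9, ttl_s: float = 3600.0):
        self.embed = embed          # text -> vector
        self.threshold = threshold  # minimum cosine similarity for a semantic hit
        self.ttl_s = ttl_s
        self.exact = {}             # (group, prompt) -> (answer, expires_at); Redis stand-in
        self.vectors = {}           # group -> [(vector, answer, expires_at)]; Qdrant stand-in

    def put(self, group, prompt, answer, now=None):
        expires = (now if now is not None else time.time()) + self.ttl_s
        self.exact[(group, prompt)] = (answer, expires)
        self.vectors.setdefault(group, []).append((self.embed(prompt), answer, expires))

    def get(self, group, prompt, now=None):
        now = now if now is not None else time.time()
        hit = self.exact.get((group, prompt))
        if hit and hit[1] > now:
            return hit[0]
        query = self.embed(prompt)
        best, best_score = None, self.threshold
        for vec, answer, expires in self.vectors.get(group, []):  # only this group's entries
            score = cosine(query, vec)
            if expires > now and score >= best_score:
                best, best_score = answer, score
        return best
```

With a threshold of 0.8, "How do I change my password?" scores about 0.83 against the cached "How do I reset my password?" and is served from cache, while the identical prompt from another group misses. Raising the threshold trades hit rate for precision, which is the knob the per-group setting exposes.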

/10/Cost Control & FinOps

Stop runaway invoices. Then cut the bills that do remain.

- Per-group budget limits — daily dollar caps, token limits, request limits for both LLM and MCP.

- Real-time enforcement — exceed a limit and the next request is blocked (HTTP 429).

- Batch processing support — route non-urgent workloads through provider batch APIs at up to 50% lower cost.

- Semantic cache — exact-match plus similarity caching across the AI surface. Same question worded differently? Cached response. Typically 20–40% off token spend.

- Smart model routing — route each call to the cheapest model that meets policy. Premium tiers reserved for queries that actually need them. Typically 30%+ savings.

- Usage dashboards at every level — organization-wide, per-team, per-model, per-tool.

- Cost attribution — see exactly which group, user, or API key generated each cost.

- Webhook alerts for proactive budget management — send budget-threshold notifications to any endpoint you already monitor.
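Real-time budget enforcement and a threshold webhook can be modeled as a small counter. A minimal sketch, assuming a single daily dollar cap per group, an alert at a hypothetical 80% of that cap, and a list standing in for outgoing webhook calls. The request that crosses the cap still completes; the next one receives HTTP 429.

```python
from dataclasses import dataclass, field


@dataclass
class DailyBudget:
    limit_usd: float
    alert_ratio: float = 0.8                   # hypothetical: alert at 80% of the cap
    spent_usd: float = 0.0
    alerts: list = field(default_factory=list)  # stand-in for outgoing webhook calls

    def admit(self) -> int:
        """HTTP status for the next request: 429 once the cap is reached or exceeded."""
        return 429 if self.spent_usd >= self.limit_usd else 200

    def record(self, cost_usd: float, group: str) -> None:
        """Add a completed request's cost and fire the alert once when the threshold is crossed."""
        before = self.spent_usd
        self.spent_usd += cost_usd
        threshold = self.limit_usd * self.alert_ratio
        if before < threshold <= self.spent_usd:
            self.alerts.append(f"{group}: {self.spent_usd:.2f} of {self.limit_usd:.2f} USD spent")
```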

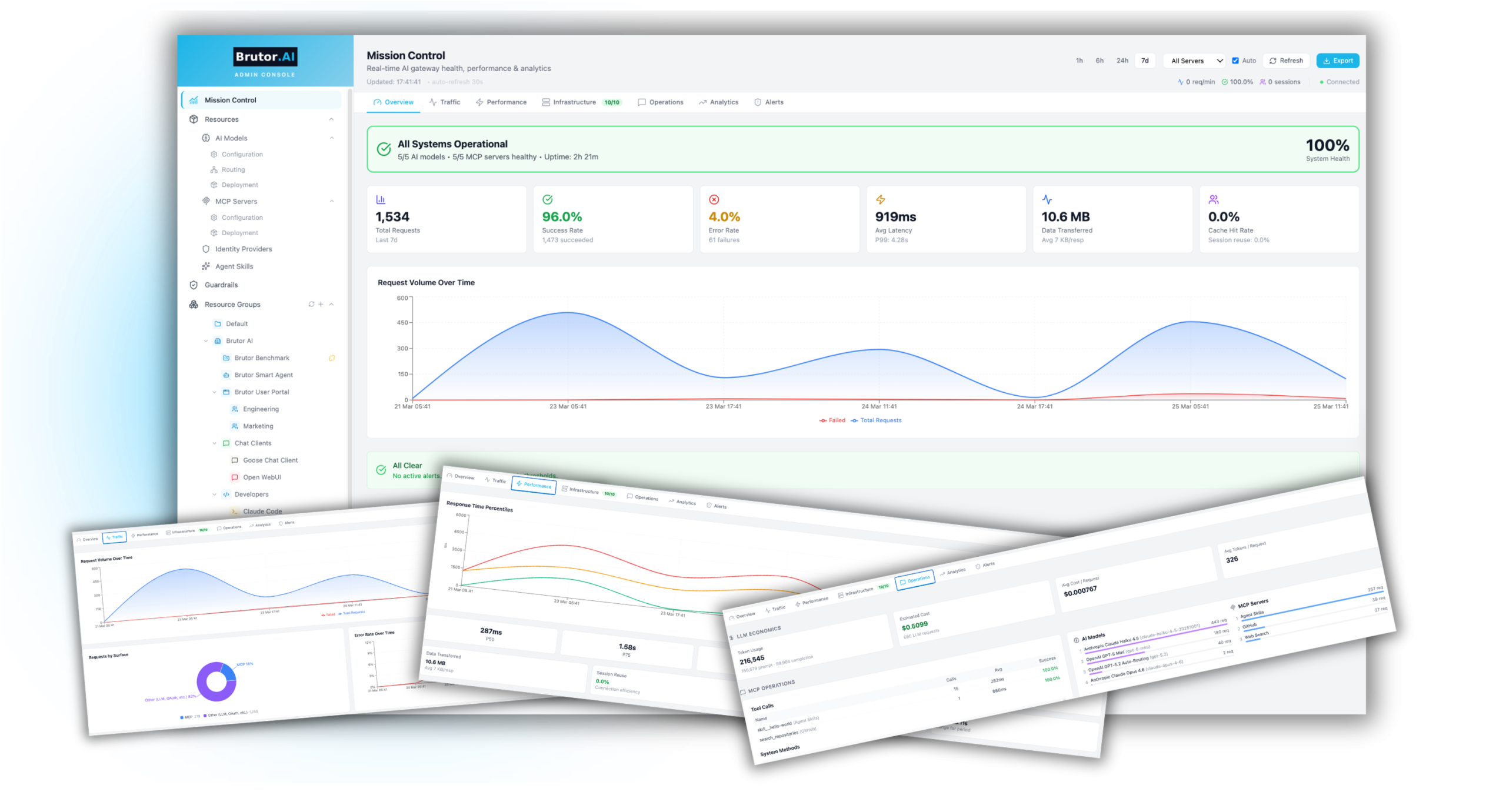

/11/Observability & Mission Control

Real-time dashboards. Complete audit trails. Nothing hidden.

- Mission Control dashboard — requests over time, success rates, P99 latency, data transfer, cache stats.

- Infrastructure health — real-time status of every MCP server and AI model, reachable or not.

- LLM economics — token costs, average cost per request, model-level cost comparison.

- Alert system — usage-limit breaches, unhealthy models, with acknowledgement workflow.

- Bring your own observability stack — native OpenTelemetry hooks plug into Prometheus, Grafana, Loki, or any OTel-compatible backend you already run.

The full enterprise pipeline. No detectable latency cost.

Every request through Brutor passes auth, RBAC, model routing, governance, cost tracking, quota enforcement, guardrails, and semantic cache lookup. Measured against the same models called directly, the added median latency was between 0 and 53 ms.

Note: the test was run by Brutor AI staff in May 2026 using its own benchmarking tool that ships with the proxy. The full methodology, raw numbers, and the “run it yourself” instructions are in the linked benchmark report — including caveats about small-N stats, localhost loopback, and Apple-Silicon test hardware.

Drop in. No lock-in.

Brutor speaks the protocols your stack already uses. Switch the AI infrastructure beneath your apps without rewriting clients, without inheriting proprietary endpoints, and without giving up the deployment flexibility your security team needs.

Drop-in replacement for any OpenAI client. Same SDKs, same wire format. Point at Brutor’s URL and ship.
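Because the gateway speaks the OpenAI wire format, a client needs only a different base URL. The sketch below builds, without sending, a standard chat completions request using only the standard library; the gateway URL, API key, and model name are placeholders, not real Brutor values. With the official OpenAI SDKs the equivalent change is usually just the client's base URL setting (for example, `base_url` in the Python SDK).

```python
import json
import urllib.request

GATEWAY_URL = "https://gateway.example.internal/v1"  # hypothetical gateway endpoint


def chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-format chat completion request aimed at the gateway."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        f"{GATEWAY_URL}/chat/completions",
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )

# Sending it would be: urllib.request.urlopen(chat_request(key, model, prompt))
```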

Full Model Context Protocol support — tools and resources, with stateful session management and multi-server aggregation. Works with every MCP-compliant client (Claude Code, Goose, IDE plugins).

First-class Agent-to-Agent protocol with signed agent cards, HMAC delegation chains, full task lifecycle. Same governance as LLM traffic.

On-prem · private cloud · Brutor SaaS. Customer-owned data, customer-controlled deployment.

AI is everywhere in your organization — your control over it will be too.

Spin up a trial in minutes, or book a 30-minute demo with our team.